OpenClaw is ON FIRE! It's gonna be everyone's new uber-PA. Just slur a command into WhatsApp, then order another margarita by the pool, and have no fear...our lobster friend will do the rest. #winning

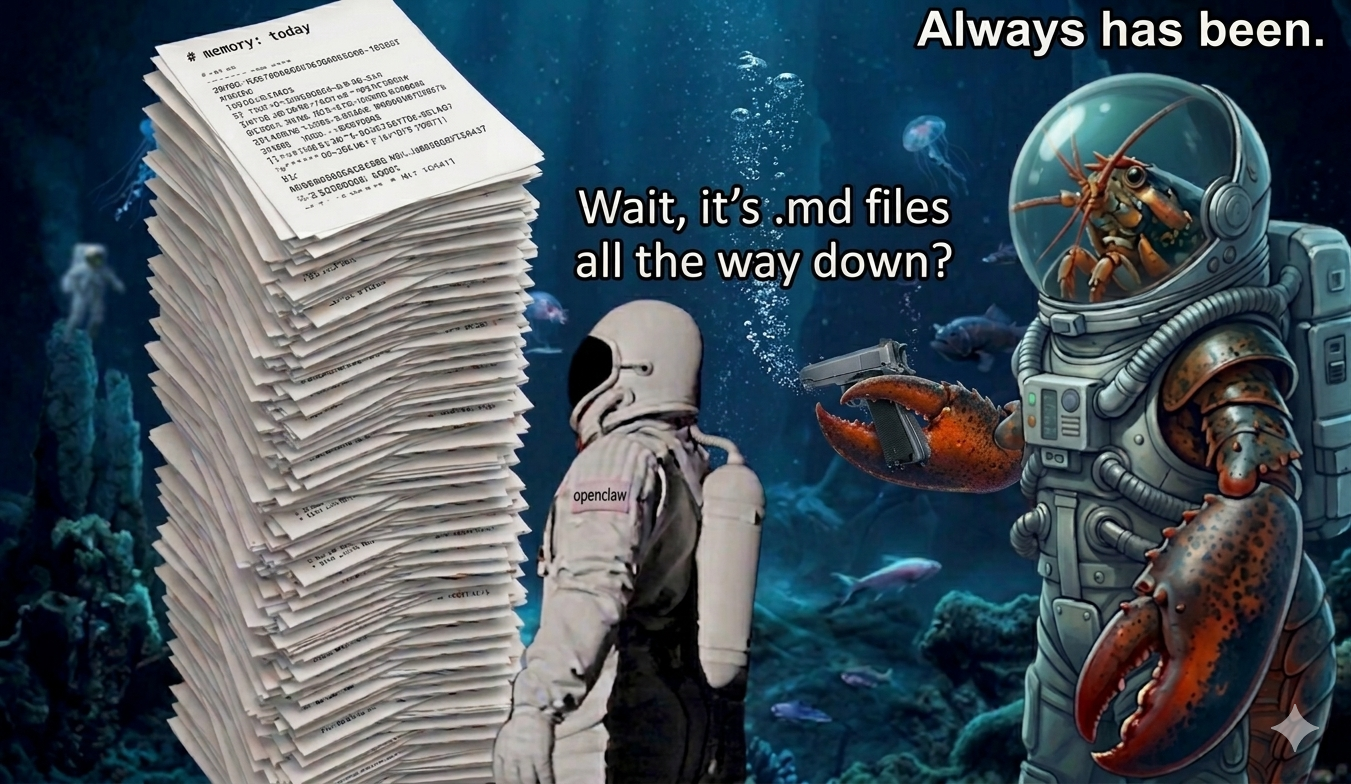

Problem: OpenClaw out of the box has a dirty secret...it's flat files all the way down.

"Why do I care?" I hear you say. "I have a digital lobster that can do stuff!"

Here's the thing. Agents have context windows. Context windows have limits. All those files need to be loaded into that window. When it fills up, the system compresses. A friend told me before I spun one up: make sure you know about the /compact command.

What could possibly go wrong?

Memory compression is lossy. And the cost compounds over time.

OpenClaw's entire history — every task, every decision, every learned context — lives in a folder of markdown files. When the context window fills up, the system compresses. When it compresses badly, the agent forgets things it shouldn't. When it forgets things, you wonder if you are the only one ordering doubles from your new friend at the pool bar.
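To show what "lossy" means here, this is a toy model of compaction. It is not OpenClaw's actual algorithm, and I haven't dug into how /compact works internally. It simply assumes a fixed context budget and counts tokens crudely as whitespace-separated words. When the notes overflow, it keeps the newest ones that fit and collapses everything older into a one-line stub. All the names are hypothetical.

```python
# Toy model of context compaction. Hypothetical: NOT OpenClaw's real /compact logic.
# Tokens are approximated as whitespace-separated words to keep things simple.

STUB_TOKENS = 4  # "[N earlier notes compacted]" is four words


def count_tokens(text: str) -> int:
    """Crude token estimate: one token per whitespace-separated word."""
    return len(text.split())


def total_tokens(notes: list[str]) -> int:
    return sum(count_tokens(n) for n in notes)


def compact(notes: list[str], budget: int) -> list[str]:
    """Keep the newest notes that fit the budget; replace everything older with a stub.

    The stub only records how many notes were dropped. Their content is gone.
    """
    if total_tokens(notes) <= budget:
        return list(notes)
    kept: list[str] = []
    used = STUB_TOKENS  # reserve room for the stub itself
    for note in reversed(notes):  # walk newest to oldest
        cost = count_tokens(note)
        if used + cost > budget:
            break
        kept.append(note)
        used += cost
    dropped = len(notes) - len(kept)
    return [f"[{dropped} earlier notes compacted]"] + kept[::-1]


notes = [
    "user prefers window seats on flights",
    "passport expires in March",
    "book pool cabana for Friday",
    "order two margaritas",
]
print(compact(notes, budget=12))
# ['[2 earlier notes compacted]', 'book pool cabana for Friday', 'order two margaritas']
```

The window-seat preference and the passport date are exactly the details you'd want a PA to remember, and they're the first to go.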

This isn't just about lossy memory; it's about cost. As memories accumulate, each one becomes a series of tokens, and each token costs money. Because all of those tokens get passed along with every prompt, every request gets more expensive with each memory that's added.
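Here's the back-of-the-envelope arithmetic behind that, under deliberately simple assumptions of my own: every prompt carries a fixed base cost, one memory of fixed size is added after each prompt, and every accumulated memory rides along with every later prompt. The sizes are made up for illustration, not measured.

```python
# Illustrative arithmetic with made-up sizes, not measurements from OpenClaw.
# Assumptions: fixed base prompt, one memory of fixed size added after each prompt,
# and every accumulated memory is sent with every later prompt.


def prompt_tokens(i: int, base: int, per_memory: int) -> int:
    """Tokens sent on prompt i (0-indexed) once i memories have piled up."""
    return base + i * per_memory


def cumulative_tokens(n: int, base: int, per_memory: int) -> int:
    """Total tokens over n prompts: n*base + per_memory * n*(n-1)/2."""
    return n * base + per_memory * n * (n - 1) // 2


BASE, PER_MEMORY = 2_000, 150  # hypothetical token counts
for n in (10, 100, 1_000):
    print(n, prompt_tokens(n - 1, BASE, PER_MEMORY), cumulative_tokens(n, BASE, PER_MEMORY))
# 10 3350 26750
# 100 16850 942500
# 1000 151850 76925000
```

Per-prompt cost grows linearly, so the running total grows quadratically. Any finite context window gets blown through somewhere along that line, and that's where compaction starts throwing things away.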

I want to be clear: I'm not coming down on Peter Steinberger here. OpenClaw as shipped is a simple, powerful tool that is easy to set up and has no dependencies. Run a couple of basic terminal commands and you've got a briny buddy at your behest. There's a reason OpenClaw has gone parabolic.

But if this is really going to be your permanent digital assistant, it needs better memory. Real persistence.

That's been my obsession for some time. I built CodeManager.rkp for exactly this reason.

So here's what we're doing: testing whether a Postgres backend with pgvector can replace the flat files and actually solve this.

If I'm right:

- Compaction should be reduced or even eliminated

- Token burn should go up far less quickly (adding an index layer later could flatten this curve even further)

- Recall should improve and drift should drop, resulting in better answers and better work

- Latency should drop. Semantic search should mean faster responses, especially as time goes by

If I'm wrong, flat files will have proven they're good enough and I'll have wasted time on a database nobody needs.
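For anyone wondering what "semantic search" over memories means in practice: instead of loading every memory, the agent embeds the current request and pulls back only the few most similar memories. In pgvector that's roughly a `vector` column plus an `ORDER BY embedding <=> query LIMIT k` query, where `<=>` is pgvector's cosine-distance operator. Below is a minimal in-memory sketch of the same ranking in plain Python so it runs without a database. The table and column names, the tiny 3-dimensional "embeddings" and the helper names are all hypothetical; a real setup would get embeddings from a model and let Postgres do the ranking.

```python
import math

# Roughly what the pgvector side looks like (hypothetical table/column names):
#   CREATE EXTENSION IF NOT EXISTS vector;
#   CREATE TABLE memories (id bigserial PRIMARY KEY, body text, embedding vector(3));
#   SELECT body FROM memories ORDER BY embedding <=> %s LIMIT 2;


def cosine_distance(a: list[float], b: list[float]) -> float:
    """1 - cosine similarity, the measure pgvector's <=> operator computes."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


def top_k(memories: dict[str, list[float]], query: list[float], k: int) -> list[str]:
    """Return the k memories closest to the query; only these go into the prompt."""
    ranked = sorted(memories, key=lambda body: cosine_distance(memories[body], query))
    return ranked[:k]


# Toy 3-d "embeddings"; a real system would get these from an embedding model.
memories = {
    "prefers window seats on flights": [0.9, 0.1, 0.0],
    "passport expires in March": [0.7, 0.3, 0.1],
    "likes margaritas, no salt": [0.0, 0.2, 0.9],
    "pool cabana booked for Friday": [0.1, 0.9, 0.3],
}
print(top_k(memories, query=[0.8, 0.2, 0.0], k=2))
# ['prefers window seats on flights', 'passport expires in March']
```

Whatever k ends up being, the memory portion of each prompt stays roughly fixed instead of growing with every memory. That's the whole bet.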

The experiment

Two instances of OpenClaw. Identical tasks. Identical model. One running stock flat file memory. One running a Postgres backend with pgvector semantic search.

I'm measuring four things:

- Token burn per task

- Compaction frequency

- Latency

- Task completion quality

The test battery is inference-heavy — Playwright automation, vision analysis, image collection and classification. The kind of work that hammers context hard and fast.

Both will live in identical containers on a local headless Linux box. Inference will come from an M1 Mac Studio with 32 GB of RAM.

Phase one runs on Ollama with qwen2.5vl:7b across a local network. Phase two redlines both instances on llama.cpp (Metal backend) for 12 hours. Consumer hardware. Real constraints. No cloud lab.

The low ceiling is by design. Limited hardware and models should reveal more than they hide.
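Since phase one runs on Ollama, two of the four metrics can be read straight off its responses, assuming requests go through Ollama's native API rather than its OpenAI-compatible endpoint. A non-streaming `/api/generate` response includes `prompt_eval_count` (prompt tokens), `eval_count` (generated tokens) and `total_duration` (nanoseconds). This sketch just turns one such response into per-task numbers. The `TaskMetrics` and `metrics_from_ollama` names are mine, and the sample values are invented for illustration, not results. Compaction frequency and task quality need their own instrumentation.

```python
from dataclasses import dataclass


@dataclass
class TaskMetrics:
    """Per-task numbers pulled from one non-streaming Ollama /api/generate response."""
    prompt_tokens: int
    output_tokens: int
    latency_s: float

    @property
    def token_burn(self) -> int:
        return self.prompt_tokens + self.output_tokens


def metrics_from_ollama(resp: dict) -> TaskMetrics:
    # Ollama reports durations in nanoseconds; default to 0 if a field is absent.
    return TaskMetrics(
        prompt_tokens=resp.get("prompt_eval_count", 0),
        output_tokens=resp.get("eval_count", 0),
        latency_s=resp.get("total_duration", 0) / 1e9,
    )


# Invented response for illustration; a real one would come back from
# POST http://<mac-studio>:11434/api/generate with "stream": false.
sample = {
    "model": "qwen2.5vl:7b",
    "prompt_eval_count": 5120,
    "eval_count": 310,
    "total_duration": 14_200_000_000,
}
m = metrics_from_ollama(sample)
print(m.token_burn, round(m.latency_s, 1))
# 5430 14.2
```

Logging one of these per task for each instance is enough to plot token burn and latency side by side.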

Why you should care

OpenClaw promises much and is delivering, but can we really rely on it out of the box?

The SOUL.md file in OpenClaw lays it out: "Each session, you wake up fresh. These files are your memory."

An agent that wakes up fresh every session and is then handed an ever-taller stack of notes ain't gonna get smarter. It's gonna get more forgetful.

How many memories can you hold at once?

That's why we're doing this. To see if we can give OpenClaw a memory upgrade.

Grab a dark and stormy and settle in

I'll be posting as I progress: setup, methodology, the data, and whatever breaks along the way.